Enterprise data services are the operating system for every modern business – the framework that decides how fast your teams can answer a question, how defensible your compliance posture is, and how ready you are for agentic AI. This guide breaks down what EDS actually covers in 2026, the frameworks that work, a 5-level maturity model to score yourself, and a phased roadmap that gets you to production without a two-year transformation program.

The enterprise data services market is not just growing – it is consolidating around a new architectural baseline. Fortune Business Insights values the market at $111.28 billion in 2025, projected to hit $294.99 billion by 2034 at an 11.5% CAGR. But the spend is already happening. What separates the organizations getting ROI from those stuck in pilot purgatory is not budget. It is sequencing: governance before pipelines, ownership before ingestion, and architecture matched to maturity rather than trend.

This guide replaces the generic “what is EDS” framing with something closer to how practitioners actually build it. We cover the seven core components, the three frameworks enterprises actually use (TOGAF, DAMA-DMBOK, Zachman), a 5-level maturity model, an implementation roadmap with realistic timelines, the architecture patterns that work for different org structures, and the anti-patterns that stall most programs. We also cover how Peliqan collapses the EDS stack for mid-market and service-provider teams that do not have 18 months and a six-figure consulting budget.

What enterprise data services actually mean in 2026

Enterprise data services (EDS) refers to the coordinated set of technologies, processes, and governance frameworks that collect, store, integrate, secure, govern, and activate data across the entire organization. It is not a product category – it is a capability model. The DAMA Data Management Body of Knowledge defines it as the combination of governance, quality, integration, and stewardship disciplines working together to make data trustworthy and usable at scale.

The reason this definition matters: most vendors sell a slice of EDS (a warehouse, a catalog, an ETL tool) and position it as the whole. Buying the slice without the rest is how organizations end up with three warehouses, two catalogs, and zero trusted metrics. The discipline sits above the tools.

EDS vs adjacent terms that get conflated

The seven core components of enterprise data services

Every serious EDS implementation covers the same seven functions. The technology stack differs; the capabilities do not. A gap in any one of these is where your program fails an audit, misses an SLA, or blocks an AI use case.

1. Data integration and movement

Connecting hundreds of sources (SaaS apps, databases, files, event streams, APIs) into a unified environment. The 2026 baseline includes ELT with CDC-based incremental loading, event streaming for sub-minute latency, and reverse ETL to push enriched data back into operational tools. ETL is not dead – it is just one option in a portfolio.
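To make CDC-based incremental loading concrete, here is a minimal sketch of applying a batch of change events to a target table held in memory. The `ChangeEvent` shape, the `lsn` field, and the `apply_changes` helper are illustrative assumptions, not the format of any particular CDC tool; real connectors emit richer events and handle transactions and schema changes.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class ChangeEvent:
    """Hypothetical change event; real CDC tools use their own formats."""
    op: str                # "insert", "update", or "delete"
    key: int               # primary key of the affected row
    row: dict[str, Any]    # row image after the change (empty for deletes)
    lsn: int               # position of the event in the source change log


def apply_changes(target: dict[int, dict[str, Any]],
                  events: list[ChangeEvent],
                  last_lsn: int) -> int:
    """Apply only events newer than last_lsn and return the new watermark."""
    for event in sorted(events, key=lambda e: e.lsn):
        if event.lsn <= last_lsn:
            continue  # already applied in an earlier run
        if event.op == "delete":
            target.pop(event.key, None)
        else:
            target[event.key] = event.row  # inserts and updates are upserts
        last_lsn = event.lsn
    return last_lsn


target = {1: {"name": "Acme", "plan": "free"}}
events = [
    ChangeEvent("update", 1, {"name": "Acme", "plan": "pro"}, lsn=11),
    ChangeEvent("insert", 2, {"name": "Globex", "plan": "free"}, lsn=12),
    ChangeEvent("delete", 1, {}, lsn=13),
]
watermark = apply_changes(target, events, last_lsn=10)
```

Because events at or below the stored watermark are skipped, replaying the same batch leaves the target unchanged, which is the property that makes incremental loads safe to retry.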

2. Storage and compute separation

Centralized, scalable storage that decouples from compute. The practical test: can you add users or scale an analytical workload without rearchitecting storage? Modern cloud data warehouses, lakehouses, and object-storage architectures built on open table formats like Apache Iceberg all pass this test. On-prem Teradata and legacy appliances do not.

3. Governance, lineage, and stewardship

Policies, processes, and automation that enforce quality, privacy, compliance, and lineage. The difference between governance that works and governance that becomes shelfware is whether controls are embedded in daily workflows or live in a separate “governance tool” nobody opens. Active metadata platforms push policies into the tools where teams already work.

4. Transformation and semantic modeling

The logic layer where raw tables become business-ready metrics. SQL-based transformations (dbt-style), low-code Python, and semantic models that define KPIs once and reuse them everywhere. Without this layer you get metric drift: three dashboards, three revenue numbers, zero trust.
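One way to picture "define KPIs once and reuse them everywhere" is a small metric registry that compiles every request from the same definition. This is a sketch only: the `Metric` class, the `compile_metric` function, and the table and column names are hypothetical, and production semantic layers add joins, time grains, and access rules.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Metric:
    name: str
    expression: str               # SQL aggregate that defines the KPI
    source: str                   # table the metric is computed from
    filters: tuple[str, ...] = ()


# Single source of truth: every dashboard and API compiles from this registry.
METRICS = {
    "net_revenue": Metric(
        name="net_revenue",
        expression="SUM(amount - refund_amount)",
        source="analytics.orders",            # hypothetical table name
        filters=("status = 'completed'",),
    ),
}


def compile_metric(name: str, group_by: Optional[str] = None) -> str:
    """Build the SQL for a registered metric, optionally grouped by a column."""
    metric = METRICS[name]
    select = f"{metric.expression} AS {metric.name}"
    if group_by:
        select = f"{group_by}, {select}"
    sql = f"SELECT {select} FROM {metric.source}"
    if metric.filters:
        sql += " WHERE " + " AND ".join(metric.filters)
    if group_by:
        sql += f" GROUP BY {group_by}"
    return sql


total_sql = compile_metric("net_revenue")
by_region_sql = compile_metric("net_revenue", group_by="region")
```

Both queries share the same expression and filters, so a change to the revenue definition propagates to every consumer instead of drifting per dashboard.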

5. Security and access control

Encryption, role-based and attribute-based access control, audit logs, and compliance mapping. The 2026 baseline is zero-trust: per-query access validation, continuous monitoring, and attribute-based controls that can enforce “only show rows where region = EU” without a separate view for every policy combination.
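The "only show rows where region = EU" rule can be sketched as a policy evaluated per query against user attributes, rather than a dedicated view per combination. The user attribute layout, `region_policy`, and `authorized_rows` below are assumptions for illustration; warehouses typically enforce this with native row-level security features.

```python
from typing import Any, Callable

Row = dict[str, Any]
User = dict[str, Any]
Policy = Callable[[User, Row], bool]


def region_policy(user: User, row: Row) -> bool:
    """Allow a row when the user's region attribute covers the row's region."""
    return "*" in user["regions"] or row["region"] in user["regions"]


# Policies are evaluated on every query; adding one needs no new views.
POLICIES: list[Policy] = [region_policy]


def authorized_rows(user: User, rows: list[Row]) -> list[Row]:
    """Return only the rows that every policy permits for this user."""
    return [row for row in rows if all(policy(user, row) for policy in POLICIES)]


rows = [
    {"id": 1, "region": "EU"},
    {"id": 2, "region": "US"},
    {"id": 3, "region": "EU"},
]
eu_analyst = {"name": "eu_analyst", "regions": {"EU"}}
global_admin = {"name": "global_admin", "regions": {"*"}}

eu_visible = authorized_rows(eu_analyst, rows)
admin_visible = authorized_rows(global_admin, rows)
```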

6. Activation and consumption

How data reaches its consumers. Dashboards are one channel – embedded analytics, published APIs, AI agents, operational sync-backs, and automated distribution to Slack, Teams, and email are the rest. If 80% of your consumption is still dashboards in 2026, activation is your gap.

7. Observability and data ops

Monitoring pipeline health, freshness SLAs, schema drift, and anomaly detection. The signal that you have this right: incidents get caught before downstream consumers notice them. The signal that you don’t: your finance team is your data quality alerting system.
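Two of these checks are simple enough to sketch directly: a freshness SLA and a schema drift comparison. The function names, the two-hour SLA, and the column types below are illustrative assumptions; observability platforms run equivalent checks against warehouse metadata on a schedule.

```python
from datetime import datetime, timedelta, timezone


def is_fresh(last_loaded_at: datetime, max_age: timedelta, now: datetime) -> bool:
    """True when the most recent load is within the freshness SLA."""
    return now - last_loaded_at <= max_age


def detect_schema_drift(expected: dict[str, str],
                        actual: dict[str, str]) -> dict[str, list[str]]:
    """Compare column name -> type mappings and report what changed."""
    shared = expected.keys() & actual.keys()
    return {
        "added": sorted(actual.keys() - expected.keys()),
        "removed": sorted(expected.keys() - actual.keys()),
        "retyped": sorted(col for col in shared if expected[col] != actual[col]),
    }


now = datetime(2026, 1, 15, 9, 0, tzinfo=timezone.utc)
last_load = datetime(2026, 1, 15, 6, 30, tzinfo=timezone.utc)
fresh = is_fresh(last_load, max_age=timedelta(hours=2), now=now)

expected = {"order_id": "int", "amount": "decimal", "region": "string"}
actual = {"order_id": "int", "amount": "float", "currency": "string"}
drift = detect_schema_drift(expected, actual)
```

In this example the load is 2.5 hours old against a 2-hour SLA, and the drift report flags one added, one removed, and one retyped column. Either result would be the trigger for an alert before a consumer opens a broken report.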

Three frameworks that enterprises actually use

You will not pick one framework and run it end-to-end. Mature organizations borrow from all three depending on the conversation – TOGAF for executive buy-in, DAMA-DMBOK for discipline definitions, Zachman for completeness checking. Understand what each is good for, then stop debating and start using them.

The 5-level EDS maturity model

Score yourself honestly against these five levels before you pick tools or patterns. Most organizations sit at Level 2 or 3 and waste budget trying to implement Level 4 patterns. The right move at Level 1 is foundation, not fabric.

Level 1 – Ad hoc

Level 2 – Reactive

Level 3 – Managed

Level 4 – Proactive

Level 5 – Optimized

Architecture patterns: matching the pattern to your maturity

Four architecture patterns dominate EDS conversations in 2026. The mistake most teams make is adopting the pattern that is trending (data mesh in 2023, data fabric in 2024, lakehouse in 2025) rather than the one that fits their maturity level and org structure. Here is when each actually works.

Anti-patterns that stall EDS programs

- Dashboard-first thinking: Building visualization layers before semantic models. You get 200 dashboards and no trusted metrics.

- Tool-first thinking: Buying a platform before defining use cases or ownership. Gartner predicts that 80% of data and analytics governance initiatives will fail by 2027 for lack of a real crisis to drive change.

- Governance in a silo: Standing up a governance team that produces policies nobody reads. Governance must live in the tools practitioners already use.

- Mesh at Level 2: Adopting a data mesh architecture without the platform maturity to support it. You decentralize problems rather than solve them.

- Consulting-led everything: Architecture slides without operational runbooks. Six months in you have a strategy document and zero pipelines.

Market sizing and why spending is accelerating

The enterprise data services market is large, growing, and concentrated. A few numbers that matter for the 2026 business case:

- Global market value (2025): $111.28 billion (Fortune Business Insights)

- Projected value (2034): $294.99 billion at 11.5% CAGR

- Enterprise AI market by 2030: $150-200 billion, 30%+ CAGR

- Generative AI investment by 2032: $1.3 trillion projected

- Large enterprise share: ~61% of total EDS spending

- North America share: 30-34% of global market

- Services growth (consulting, managed): 12% CAGR, outpacing software
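As a sanity check on the headline figures, the growth rate implied by the 2025 and 2034 values can be recomputed from the standard compound annual growth rate formula. The sketch below uses only the numbers cited above; the `cagr` and `project` helpers are illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding start_value at rate for the given years."""
    return start_value * (1 + rate) ** years


# Figures cited above, in billions of USD.
implied_rate = cagr(111.28, 294.99, years=2034 - 2025)
projected_2034 = project(111.28, 0.115, years=2034 - 2025)

print(f"Implied CAGR: {implied_rate:.2%}")
print(f"2034 value at 11.5%: ${projected_2034:.2f}B")
```

The implied rate comes out at roughly 11.4%, close to the cited 11.5%. The small gap is plausibly rounding or a different base year in the source report.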

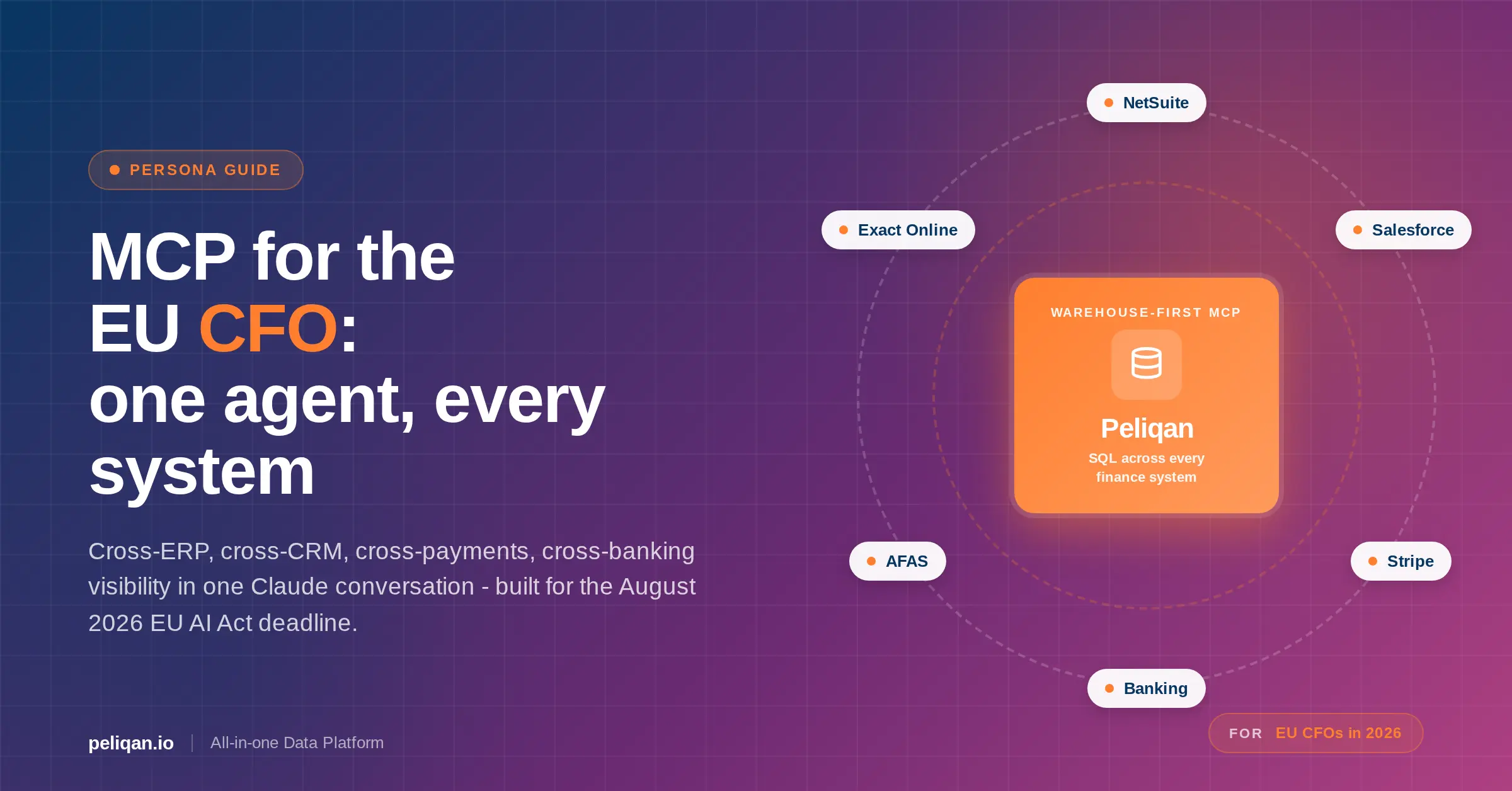

The growth is driven by three forces converging: AI workloads need governed data at scale, regulatory pressure continues to increase (GDPR, CCPA, EU AI Act, BCBS 239), and hybrid multi-cloud has become the default rather than the exception. According to Bloomberg’s 2025 Enterprise Data & Tech Summit insights, financial institutions are rebuilding data infrastructure around interoperable cloud platforms and governed AI pipelines as a competitive baseline rather than a differentiator.

Use cases by industry – what actually gets built

Generic use case lists do not help anyone choose. Here are the EDS patterns that show up repeatedly in 2026 implementations, by industry, with the specific capability combinations that drive them.

Financial services

Customer 360 for cross-sell and risk, real-time fraud detection on streaming data, BCBS 239 compliance reporting, and KYC/AML automation with lineage. The defining requirement: regulatory-grade lineage that can prove where every number in a report came from, across on-prem and cloud. Data lineage that only covers one system is not enough.

Healthcare and life sciences

Patient data integration across EHR software, lab systems, and claims, predictive analytics for resource allocation, and HIPAA/GDPR-compliant research data platforms. The hard problem is consent management and de-identification at scale – not the integration itself.

Manufacturing

IoT telemetry aggregation for predictive maintenance, supply chain visibility across tiers of suppliers, and quality control that correlates line data with batch outcomes. Edge-to-cloud pipelines with sub-minute latency are becoming the 2026 baseline.

Retail and e-commerce

Omnichannel customer data platform with unified profiles, real-time personalization via reverse ETL into CDPs and marketing tools, and inventory optimization across stores, warehouses, and fulfillment partners. Retailers using AI at scale are realizing ROI up to six times faster than late adopters, according to Cloudera’s 2026 industry research.

SaaS and service providers

Multi-tenant embedded analytics for customers, white-label data warehouses, and consolidated operational dashboards across product telemetry, billing, and support. The twist for SaaS: your EDS platform often becomes a revenue product, not just infrastructure.

A phased implementation roadmap with realistic timelines

A full EDS modernization across all seven components typically takes 12-24 months with ongoing optimization thereafter. Initial value should land in 3-6 months through phased delivery. If your consultants are quoting 18 months to first dashboard, something is wrong.

Phase 1 – Foundation (Months 1-3)

Pick your top 5-8 data sources by business criticality (not technical novelty). Centralize ingestion via managed connectors with CDC where available. Stand up a warehouse or lakehouse with basic role-based access. Define the first 10-15 canonical metrics and build a thin semantic layer. Ship three dashboards that leadership actually uses.

Phase 2 – Governance and quality (Months 3-6)

Add observability and data quality monitoring to every production pipeline. Define data owners per domain. Stand up a catalog and populate it with the critical 20% of tables that drive 80% of consumption. Map compliance controls (GDPR, SOC 2, industry-specific) to technical enforcement.
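Finding the critical tables to catalog first can be done from query logs. The sketch below picks the smallest set of tables that covers a target share of queries; the table names and counts are made up, and `critical_tables` is a hypothetical helper rather than a feature of any catalog product.

```python
def critical_tables(query_counts: dict[str, int], coverage: float = 0.8) -> list[str]:
    """Return the most-queried tables that together reach the coverage share."""
    total = sum(query_counts.values())
    selected: list[str] = []
    covered = 0
    ranked = sorted(query_counts.items(), key=lambda item: item[1], reverse=True)
    for table, count in ranked:
        if total == 0 or covered / total >= coverage:
            break  # target share reached; the remaining tables can wait
        selected.append(table)
        covered += count
    return selected


# Hypothetical query counts over the last 90 days.
query_counts = {
    "orders": 500,
    "customers": 300,
    "invoices": 100,
    "tmp_export": 60,
    "legacy_2019": 40,
}
catalog_first = critical_tables(query_counts)
```

Here two of five tables account for 80% of queries, so those are the ones to document, assign owners to, and monitor first.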

Phase 3 – Activation and self-service (Months 6-12)

Expand from 5-8 sources to 30+. Add reverse ETL for the top three operational systems (CRM, marketing automation, support). Enable self-service for business teams with curated datasets and guardrails. Start building data APIs and embedded analytics where product teams need them.

Phase 4 – Scale and intelligence (Months 12-24)

Move to domain-driven ownership. Put ML models in production with monitoring. Deploy AI agents that act on governed data. Automate compliance reporting. Measure data products by usage and business impact, and sunset the ones that no longer earn their keep.

ROI: what the numbers actually look like

The EDS business case is real, but the published numbers span a huge range. Here is what holds up in practice, with the caveat that your starting point matters more than any benchmark.

Two things to note. First, labor savings are the most reliable category – you can measure hours saved. Revenue uplift is harder and gets disputed in finance reviews. Lead with savings. Second, these ranges assume competent execution. A program that stalls at Phase 1 realizes maybe 20% of the range.

The leading EDS provider landscape in 2026

The EDS market has consolidated into a few clear categories. No single vendor covers everything; the question is which combination fits your maturity, budget, and operating model.

Peliqan: a consolidated EDS platform for teams that need to ship this quarter

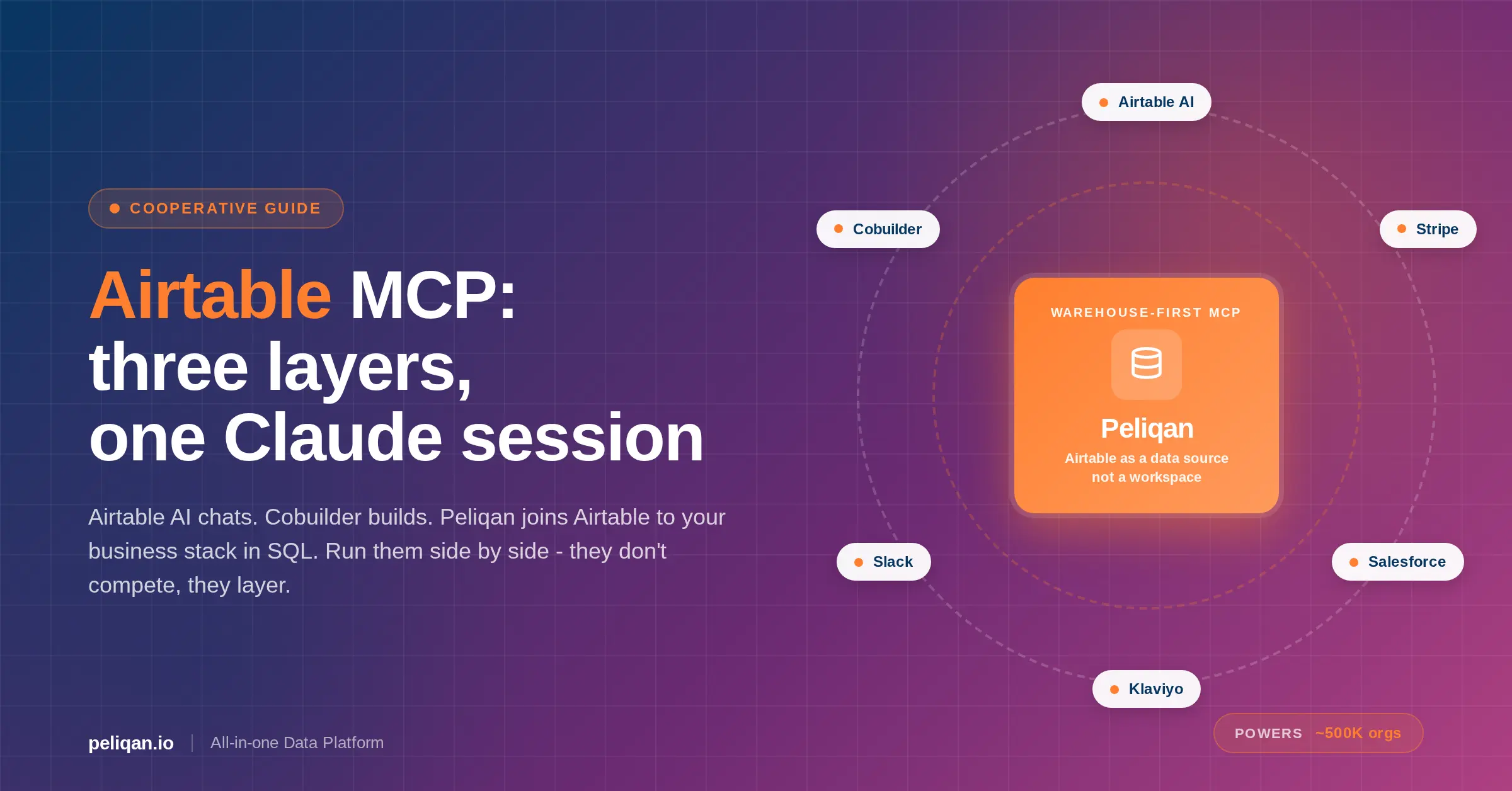

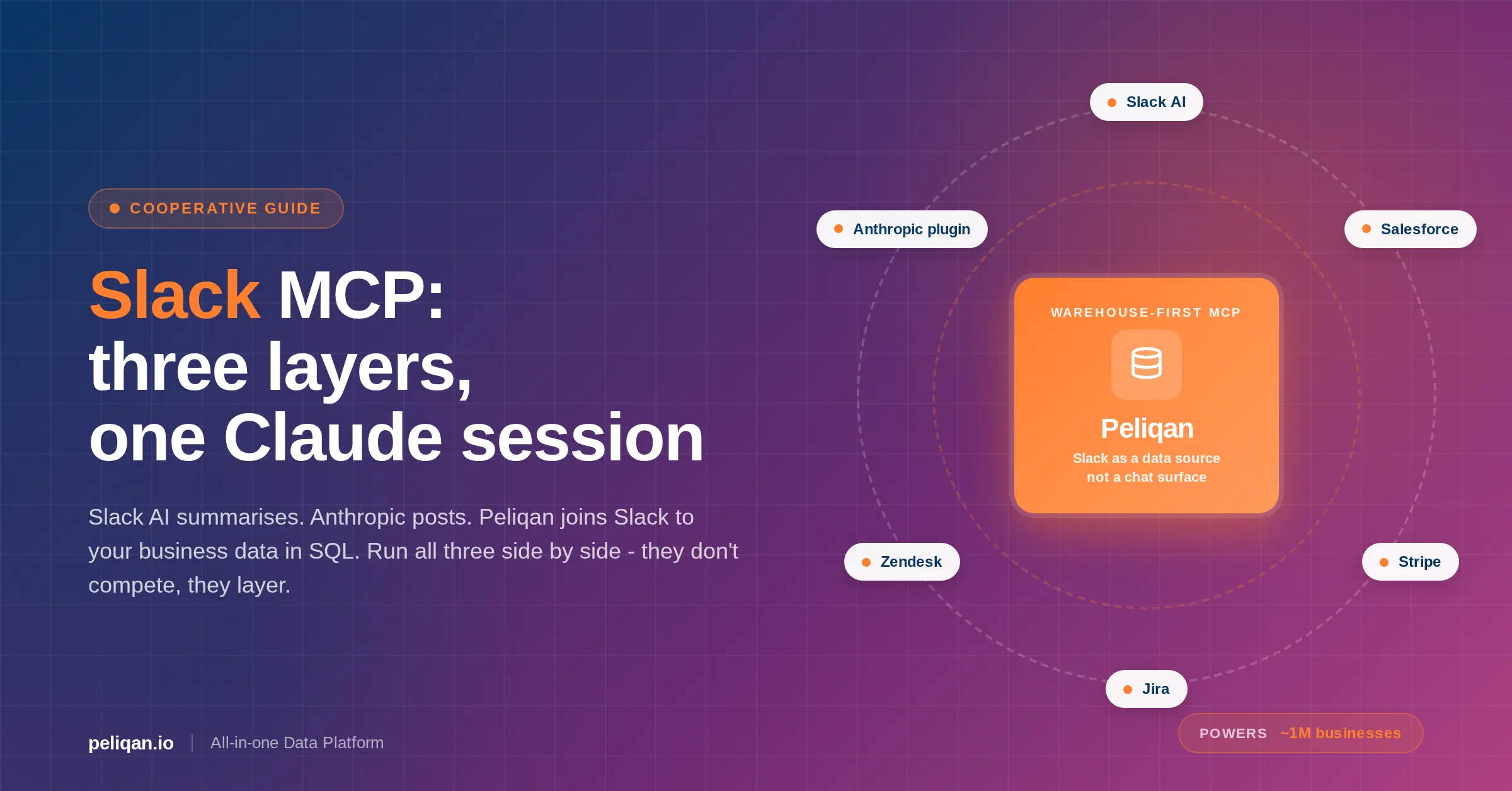

Most EDS stacks assemble 6-10 tools: an ELT platform, a warehouse, a transformation tool, a catalog, an observability platform, a reverse ETL tool, a BI layer, and an orchestrator. Every tool is a contract, an integration point, and a failure mode. Peliqan collapses most of that stack into a single platform designed for teams that do not have a 10-person data engineering org.

What Peliqan covers out of the box

The pricing model matters as much as the features. Peliqan runs from ~$199/month with fixed pricing, not compute-based billing that surprises you at quarter close. For teams at Level 1-3 maturity building their first real EDS capability, or service providers delivering data services to multiple clients, this is the profile that fits.

Real-world example: CIC Hospitality

CIC Hospitality unified data from 50+ sources into a single data platform and now saves 40+ hours per month by fully automating board reports. The combination of connectors, warehouse, and scheduled distribution replaced what would otherwise be a multi-tool stack plus manual assembly work. Read the full case study.

Service providers building consolidated data operations for clients – ERP consultancies, finance consultancies, and SaaS vendors embedding analytics – see similar consolidation benefits. Rezolv, an ERP consultancy, uses Peliqan to streamline complex ERP migrations and transformations for multiple clients from a single platform, replacing what used to be bespoke pipelines per engagement.

The 2026-2027 trends that will reshape EDS

Three shifts are moving from trend deck to production reality over the next 24 months. None of them require waiting – the gap between early adopters and the rest is about to widen.

Agentic AI in operational workflows

AI agents stop being demos and start handling real workflows: reconciliation, anomaly investigation, routine customer service, and compliance reporting. Cloudera’s 2026 research indicates AI agents will become part of operational workflows this year, not next. The EDS requirement: agents need governed data with clear permissions, observability into what they accessed, and audit trails – otherwise you are running unsupervised automation on sensitive data.

Making data AI-ready – cleaned, contextualized, and permission-scoped before agents touch it – is now a prerequisite rather than a nice-to-have, and it is where most agentic AI rollouts quietly fail.
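The three requirements (clear permissions, visibility into what was accessed, and an audit trail) can be sketched as a thin gateway that every agent read passes through. The `GovernedDataAccess` class, agent IDs, and dataset names are assumptions for illustration, not a description of any specific product.

```python
from datetime import datetime, timezone
from typing import Any


class GovernedDataAccess:
    """Hypothetical gateway: checks grants and records every read attempt."""

    def __init__(self, grants: dict[str, set[str]],
                 datasets: dict[str, list[dict[str, Any]]]) -> None:
        self.grants = grants          # agent id -> datasets it may read
        self.datasets = datasets
        self.audit_log: list[dict[str, Any]] = []

    def read(self, agent_id: str, dataset: str) -> list[dict[str, Any]]:
        allowed = dataset in self.grants.get(agent_id, set())
        # Log before enforcing so denied attempts are also auditable.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "dataset": dataset,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"{agent_id} may not read {dataset}")
        return self.datasets[dataset]


gateway = GovernedDataAccess(
    grants={"recon_agent": {"ledger"}},
    datasets={
        "ledger": [{"entry": 1, "amount": 120.0}],
        "payroll": [{"employee": "E-7", "salary": 5000}],
    },
)
ledger_rows = gateway.read("recon_agent", "ledger")
try:
    gateway.read("recon_agent", "payroll")
    denied_message = ""
except PermissionError as error:
    denied_message = str(error)
```

The design choice worth noting is that the audit entry is written before the permission check raises, so the log shows what an agent tried to touch as well as what it was given.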

Unified control planes over hybrid infrastructure

After a decade of cloud migration, most enterprises ended up multi-cloud plus on-prem by default. The 2026 answer is a unified control plane that manages data services across wherever data lives, rather than forcing consolidation onto one cloud. Bloomberg’s 2025 summit insights confirm this is now the preferred architecture in financial services rather than a compromise.

Open table formats and data sovereignty

Apache Iceberg, Delta Lake, and Hudi are moving from niche to default for analytical storage. The driver is not performance – it is portability. Proprietary warehouse formats create lock-in; open formats let you swap compute engines without rewriting data. Combined with growing data sovereignty requirements (EU AI Act, country-specific residency rules), open formats plus regional deployment become a strategic hedge, not just a technical choice.

A decision framework for getting started

Pick your next move based on where you are

- Level 1-2, small team, mid-market: A consolidated platform (Peliqan or similar) gets you to Level 3 in 6-12 months without assembling 8 tools. Do not start with a data mesh reading list.

- Level 3, multi-BU, platform team of 3-8: Invest in semantic layer and catalog next. The tooling you have is probably adequate; the missing capability is shared metric definitions.

- Level 3-4, large enterprise, budget approved: Start the hub-and-spoke conversation. Pilot data-as-a-product in one domain before declaring a mesh strategy enterprise-wide.

- Level 4-5, ready for AI at scale: Focus on active metadata, agent-ready access controls, and data product economics. You do not need new tools; you need to mature the ones you have.

- Stuck at any level: The blocker is almost always governance or ownership, not technology. Budget for a quarter of organizational work before the next tool purchase.

Conclusion: treat EDS as an operating capability, not a project

Enterprise data services are not a project with an end date. They are the operating layer that determines how fast your organization can answer questions, enforce policy, activate data, and absorb AI. The organizations winning in 2026 are not the ones with the most sophisticated architecture diagrams. They are the ones that sequenced correctly: governance before pipelines, ownership before ingestion, semantic layer before self-service, and maturity-appropriate patterns over trend-chasing.

Start where you actually are, pick the two or three next-step priorities that match your maturity level, and measure progress against outcomes rather than output. A program that ships three reliable data products in 12 months beats a strategy deck covering 47 capabilities that never leaves PowerPoint.

If you are building or consolidating your EDS stack and want a platform that covers integration, warehousing, transformation, reverse ETL, and activation in one environment – with fixed pricing and a 48-hour custom connector SLA – request a demo or try Peliqan for free.