An ETL pipeline moves data from where it lives to where it gets used – and gets the format right along the way. The concept is simple. Building one that runs reliably, recovers cleanly when something breaks, and scales past a few sources is where most data teams hit a wall. This guide covers how modern ETL pipelines work in 2026, the architecture choices that matter, the code patterns that separate fragile pipelines from production-grade ones, and where AI is actually changing the game versus where it’s still hype.

What is an ETL pipeline?

An ETL pipeline is an automated workflow that pulls data out of source systems (extract), reshapes it into a consistent format (transform), and writes it into a target store like a warehouse or lake (load). The output is analysis-ready data that downstream teams – BI, finance, ops, AI agents – can trust.

The concept dates back to the 1970s. What’s changed since 2020 is the location of the heavy lifting. Cloud warehouses like Snowflake, BigQuery, and Postgres-on-cloud are now powerful enough to do most of the transformation work themselves, which has pushed many teams toward ELT (extract, load, transform). The mechanics of an ETL process are still the foundation, but the boundaries between ETL, ELT, and streaming pipelines have blurred. A well-designed modern pipeline often borrows from all three.

ETL pipeline vs data pipeline – the difference in one line

A data pipeline is any system that moves data from A to B. An ETL pipeline is a data pipeline with a specific shape: it extracts, transforms, and loads. Every ETL pipeline is a data pipeline; not every data pipeline is ETL. A Kafka stream replicating events without any transformation is a data pipeline but not an ETL pipeline.

The three stages – with code that actually runs

1. Extract

The extract stage pulls data from source systems – APIs, databases, SaaS apps, files, event streams. Most teams build this layer with specialized connectors rather than custom code, because rate limits, pagination, schema drift, and auth refresh are tedious to maintain across hundreds of sources.

A minimal Python extract from a Postgres source using SQLAlchemy and pandas:

import pandas as pd

from sqlalchemy import create_engine

engine = create_engine("postgresql://user:pass@host:5432/source_db")

# Incremental extract using a watermark column

last_synced = "2026-05-01 00:00:00"

query = f"""

SELECT id, customer_id, amount, status, updated_at

FROM orders

WHERE updated_at > '{last_synced}'

"""

df = pd.read_sql(query, engine)

print(f"Extracted {len(df)} rows since {last_synced}")

The watermark pattern (extract only rows changed since the last successful run) is the foundation of incremental loads. The alternative – full extracts every run – works for small tables but breaks down past a few million rows.

2. Transform

Transformation is where data gets cleaned, enriched, and reshaped. Typical operations: filtering, deduplication, joins, type casting, currency conversion, surrogate key generation, slowly-changing dimension handling. Modern teams write these as SQL complex transformations in the warehouse rather than in-memory in Python, because warehouses scale better for set-based operations.

-- Clean and standardize orders, deduplicate by id, latest record wins

WITH ranked AS (

SELECT

id,

customer_id,

amount,

UPPER(TRIM(status)) AS status,

CAST(amount AS NUMERIC(12,2)) AS amount_clean,

updated_at,

ROW_NUMBER() OVER (

PARTITION BY id ORDER BY updated_at DESC

) AS rn

FROM staging.orders_raw

WHERE amount IS NOT NULL

)

SELECT id, customer_id, status, amount_clean, updated_at

FROM ranked

WHERE rn = 1;

The most common transformation patterns: deduplication by primary key with a “latest wins” rule, type coercion, joining customer records across systems (a typical case for combining data from multiple sources), and applying business logic like territory assignment or revenue recognition rules.

3. Load

Loading writes the transformed data into the target. Two main patterns:

- Full load (truncate + insert): simple, idempotent, but expensive on large tables.

- Incremental load (upsert/MERGE): only writes new or changed rows. Faster, but requires reliable change detection (watermarks, CDC, or hash comparisons).

-- Snowflake/Postgres MERGE for incremental upsert

MERGE INTO analytics.orders AS tgt

USING staging.orders_clean AS src

ON tgt.id = src.id

WHEN MATCHED AND src.updated_at > tgt.updated_at THEN

UPDATE SET status = src.status,

amount = src.amount_clean,

updated_at = src.updated_at

WHEN NOT MATCHED THEN

INSERT (id, customer_id, status, amount, updated_at)

VALUES (src.id, src.customer_id, src.status, src.amount_clean, src.updated_at);

Targets are usually a cloud data warehouse – Snowflake, BigQuery, Redshift, Databricks, or Postgres. From there, BI tools, ML models, and reverse-ETL flows pick up the cleaned data for activation. Real-world data warehousing setups often combine a curated analytics layer with raw and staging zones to keep transformations debuggable.

ETL vs ELT vs reverse ETL – when to use which

Most modern stacks aren’t pure ETL or pure ELT – they’re hybrid. Sensitive PII gets masked in flight (ETL-style), bulk data lands raw and gets transformed in-warehouse (ELT-style), and a reverse-ETL layer activates the result back into operational tools.

Idempotency, CDC, and the properties of a reliable pipeline

The single most important property of an ETL pipeline is idempotency – running it twice on the same input produces the same output. Without it, retries corrupt data, backfills double-count revenue, and on-call engineers stop trusting the pipeline.

Five properties every production ETL pipeline needs

Change Data Capture (CDC) is how you keep loads incremental without missing updates. Instead of polling for changed rows, CDC reads the database’s transaction log directly – Postgres WAL, MySQL binlog, SQL Server CDC tables. The result is sub-minute latency with full fidelity on inserts, updates, and deletes. For sources without log access (most SaaS APIs), watermark-based incremental extracts are still the practical choice.

Orchestration – Airflow, Prefect, Dagster, and the modern alternatives

An orchestrator schedules pipeline runs, manages dependencies between tasks, retries failures, and surfaces what’s broken. Most teams pick one of four tools – or a managed platform that includes one.

The trend in 2026: small and mid-sized teams are shifting away from running their own orchestrator entirely. Operating Airflow at scale is a full-time job, and bundled platforms now cover 80% of the use cases without that overhead. Larger enterprises with hundreds of pipelines still benefit from a dedicated orchestrator, but the threshold has moved up.

ETL pipeline best practices for 2026

Validate at the source, not the target

Catching a schema break or a null spike inside the warehouse means downstream dashboards are already wrong. Run lightweight checks – row counts, schema diffs, freshness – at the extract stage and fail loudly before the load.

Store raw data before transforming it

Land extracted data into a raw zone untouched, then transform into staging and analytics layers. When a transform has a bug (and it will), you can replay from raw without re-extracting from the source – which is slower, rate-limited, and sometimes impossible after the fact.

Test transformations like application code

Unit tests for SQL transformations (dbt’s data tests, Dagster asset checks, Great Expectations suites) catch regressions before they hit production. The bar is the same as for backend code: every business rule has a test, every test runs in CI.

Monitor freshness, not just job success

A green Airflow DAG doesn’t mean the data is fresh – it means no exception was raised. Track table-level freshness (“orders.last_updated_at within the last 30 minutes”), volume anomalies, and null-rate spikes. Tools like Monte Carlo, Datafold, and built-in data quality and integrity checks make this routine.

Version everything – DAGs, SQL, configs

Pipeline definitions belong in Git, with the same review and CI discipline as application code. This is non-negotiable for reproducibility and rollback. More on ETL operational discipline covers the full review and deployment loop.

Document lineage automatically, not manually

Hand-maintained lineage docs go stale within weeks. Use a tool that derives lineage from your SQL (dbt, Dagster, OpenLineage, or platform-native catalogs) so it stays accurate without engineer effort. Reference architectures and a full implementation framework are useful starting points when introducing this discipline.

Common challenges and how to actually solve them

Batch, micro-batch, or streaming – picking the right cadence

Cadence is one of the highest-leverage architecture decisions in any ETL pipeline. Pick wrong and you either pay for compute you don’t need or miss SLAs that downstream teams quietly stop trusting.

The default in 2026 has shifted from “daily batch unless we really need real-time” to “hourly or micro-batch unless cost forces us to slow down.” Cloud warehouse compute has dropped enough, and freshness expectations have risen enough, that hourly is now table stakes for most analytics workloads. Sub-minute streaming remains a deliberate choice with deliberate operational cost – it’s not a default.

A reference pipeline – source to dashboard in production

To make this concrete, here’s the shape of a typical mid-sized B2B SaaS pipeline running in production today.

Reference architecture: SaaS analytics pipeline

raw schema, partitioned by source and load timestamp.fct_revenue, dim_customer, fct_product_events. Joins, business rules, and grain decisions live here.This shape – three named layers (raw, staging, marts), incremental loads, automated tests, ownership tags – maps to roughly 80% of production setups in mid-market SaaS. Where it varies is the tooling: some teams use Fivetran + dbt + Airflow + Monte Carlo as four separate products; others run the whole thing on a bundled platform; others do half in-warehouse and half in Python. The architecture is the same; the operational footprint is what differs.

The AI era – how MCP and agents are reshaping ETL

Two specific changes are landing in 2026, beyond the general “AI helps you write SQL” line.

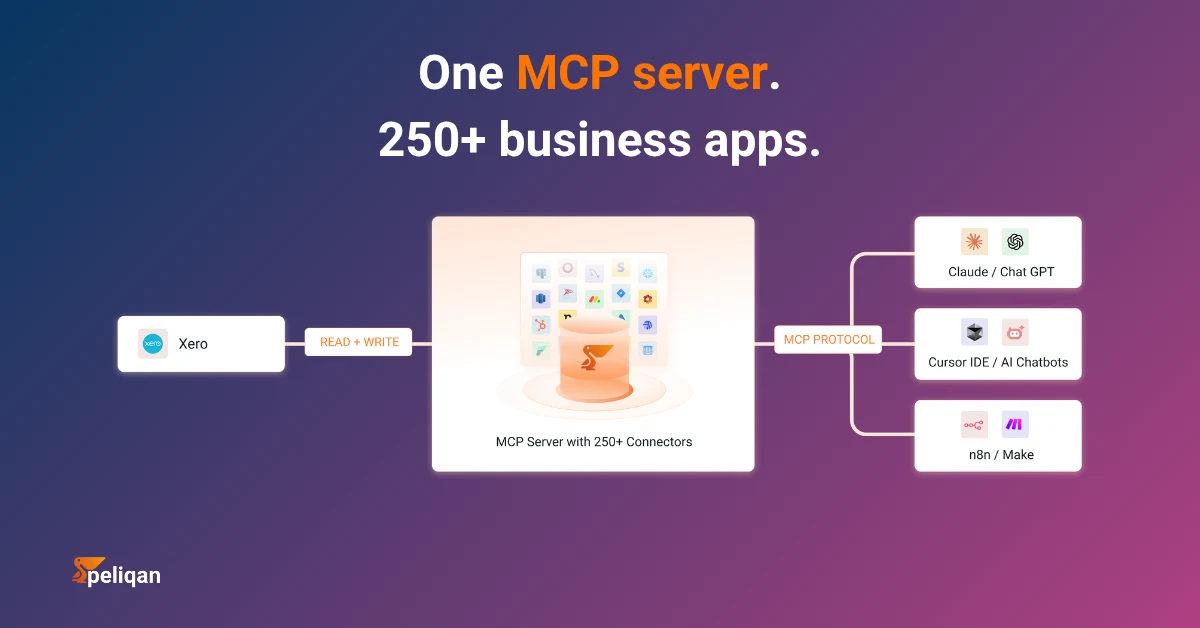

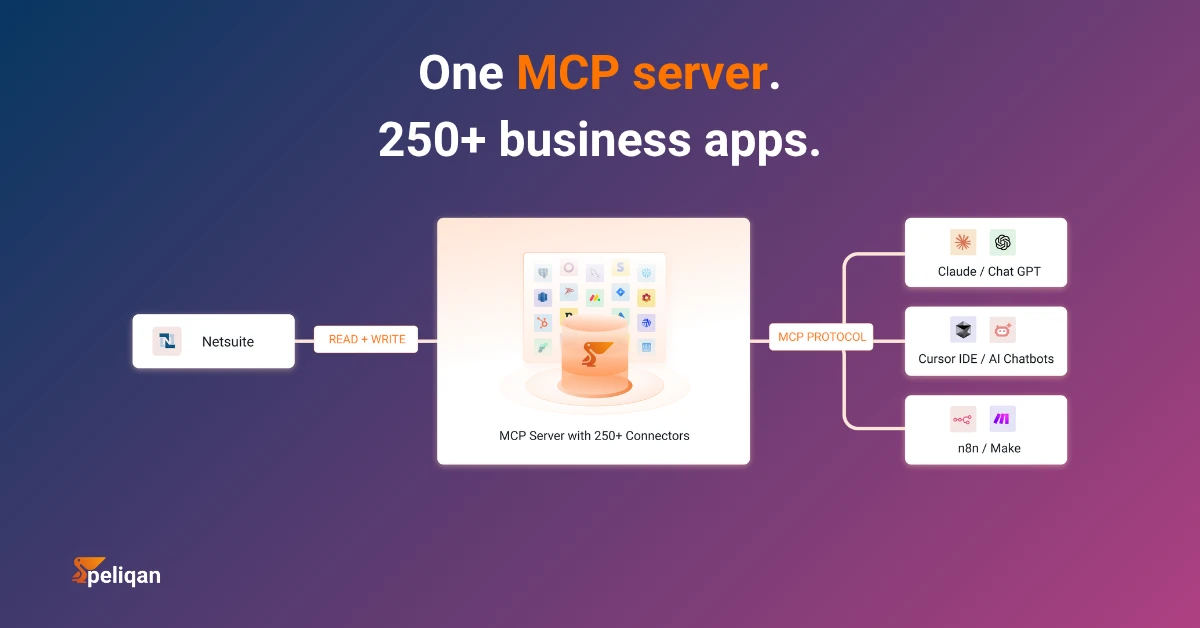

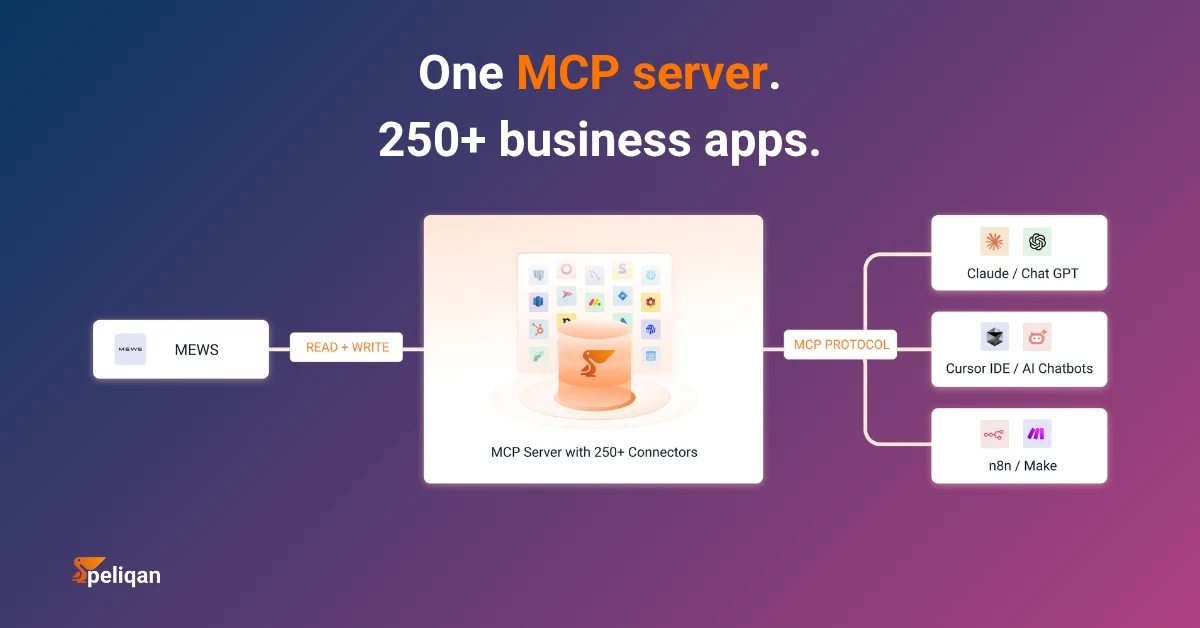

First, AI agents are now ETL consumers. Customer support agents, finance agents, and sales agents need live access to operational data to answer questions and take actions. The Model Context Protocol (MCP) gives agents a standard way to query warehouse and SaaS data on demand, which means ETL pipelines now serve two audiences: dashboards and agents. The freshness bar moves up – agents need data that’s minutes old, not hours.

Second, AI-assisted pipeline operations. LLMs are catching schema drift, suggesting transforms from sample data, classifying anomalies in monitoring streams, and writing the first draft of dbt models from natural-language prompts. This doesn’t remove the engineer – it removes the boilerplate. The judgment calls (what counts as duplicate, which definition of revenue is correct) still belong to humans.

The pipelines that will age well are the ones designed for both audiences from day one – clean schemas, fast freshness, granular access control, and an MCP-style query interface for agents.

ETL pipeline use cases worth highlighting

Business intelligence and analytics. The classic case. Data lands from CRM, finance, product, and ad platforms; gets joined into a unified fact model; powers dashboards. Most B2B SaaS companies start here and never leave.

Customer 360 and segmentation. Pulling customer interactions across email, support, product usage, and billing into a single view enables retention modeling and lifecycle marketing. This typically extends into reverse-ETL territory – pushing segments back into Salesforce, HubSpot, and ad platforms.

Financial consolidation. Multi-entity finance teams pull GL data from multiple ERPs (Exact, Yuki, NetSuite, SAP, Odoo), apply consolidation rules, and produce a single source of truth for reporting. The transform layer carries most of the complexity – currency conversion, intercompany eliminations, chart-of-accounts mapping. This is a strong use case for data integration platforms with prebuilt finance connectors.

SaaS product analytics. Event streams from product usage land in a warehouse alongside billing and CRM data. Cohort analyses, feature adoption, and PQL scoring all depend on this pipeline. Embedded analytics products often piggyback on the same flow, surfacing metrics back to end customers, which is well covered in SaaS-specific pipelines.

Compliance and audit. Regulations like NIS2, GDPR, and SOC 2 require traceable data lineage and access logs. ETL pipelines that capture lineage by design make audits manageable instead of frantic. Strong security controls at every stage – encryption at rest and in transit, RBAC, audit logs – are table stakes here.

Master data management. Resolving the same customer across systems (HubSpot ID, Stripe ID, Zendesk ID) and maintaining a canonical record. The transform layer handles the matching logic; the load layer publishes the resolved IDs back to source systems. This is a foundational layer for data management capabilities across the organization.

How Peliqan simplifies ETL pipelines

Most ETL projects fail not because the technology is wrong, but because the operational surface area is too large for the team running it. Connectors, orchestration, transforms, monitoring, and access control are usually four to six different tools – each with its own learning curve, billing model, and failure mode.

Peliqan consolidates that surface area into one platform. Extracts run through 250+ prebuilt connectors with a 48-hour SLA on custom requests. Transforms run as low-code SQL on anything or Python directly against the built-in Postgres/Trino warehouse. Reverse ETL, white-label embedding, multi-customer management, and SOC 2 Type II controls are included, not bolted on.

What you get out of the box

Real-world example: Vela Group

Vela Group, an investment firm, uses Peliqan to centralise data across their portfolio companies – consolidating financial reporting and operational metrics from each business into a single view that the investment team uses to monitor performance. The pipeline handles multiple ERPs, currencies, and reporting standards without a dedicated data engineering team. Read the full case study.

For teams already invested in Snowflake or BigQuery, Peliqan can act purely as the extract and orchestration layer, writing into the existing warehouse. For greenfield setups – especially in finance consulting, SaaS, ERP integration, and embedded analytics – using the built-in warehouse removes a meaningful chunk of operational overhead. The full ETL tools landscape has more on how the major players compare, and platform documentation walks through the specific implementation steps.

Conclusion

An ETL pipeline succeeds or fails on a small number of properties: idempotency, replayability, observability, and clear ownership. The tooling has consolidated, the transform layer has shifted into the warehouse for most teams, and AI agents are now legitimate downstream consumers alongside BI dashboards. The 2026 version of “build a good ETL pipeline” looks different from the 2018 version, but the fundamentals are unchanged – understand your sources, design for failure, test the transforms like code, and instrument everything.

The teams that ship reliable pipelines aren’t the ones with the most sophisticated stack. They’re the ones who picked an opinionated platform or set of tools, applied operational discipline, and resisted the urge to rebuild what they could rent.